The landscape of college admissions is shifting rapidly, and institutions are increasingly turning to advanced data solutions to stay competitive. At the forefront of this shift are AI-powered enrollment platforms for colleges.

But what exactly are these platforms? In higher education, an AI-powered enrollment system is a comprehensive data ecosystem that uses machine learning and predictive analytics to streamline the entire student admissions journey, from the first inquiry to the first day of classes. Rather than simply acting as a digital filing cabinet, these platforms actively analyze applicant data, automate routine outreach, predict enrollment likelihood, and personalize the prospect experience at scale.

Empowering the Admissions Office: Who Uses These Tools?

To get the most out of predictive modeling and automation, it’s essential to understand who interacts with these platforms daily and how they can best be utilized.

Admissions Counselors and Recruiters

The primary users of AI-powered enrollment tools are the admissions counselors on the front lines. Historically, these professionals have spent countless hours answering routine questions, manually logging emails, and sorting through unqualified leads.

When implemented correctly, AI-driven enrollment platforms free admissions staff to focus on high-yield activities. By delegating repetitive tasks (like initial chatbot conversations or automated document reminders) to the AI, counselors can dedicate their time to high-value interactions: conducting personal campus tours, having meaningful one-on-one conversations with prospective students, and improving yield rates.

Enrollment Leadership and Deans

For Directors of Admissions and VPs of Enrollment Management, these platforms act as a strategic dashboard. Leaders use the AI’s predictive modeling to forecast class sizes, track the health of the admissions funnel, and allocate marketing budgets more effectively. To get the best results, leadership should focus heavily on customizing AI enrollment workflows, ensuring the AI’s scoring models are trained specifically on the institution’s historical enrollment patterns rather than generic industry averages.
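To make the idea of institution-specific scoring concrete, here is a minimal sketch of a model fitted to a school's own history. It is an illustration under stated assumptions, not how any particular platform works: the segment labels, the sample records, and the function names `fit_yield_rates` and `score` are all hypothetical, and a production model would use many more features.

```python
from collections import defaultdict

# Hypothetical historical records: (segment, enrolled). The segment labels
# and outcomes are invented for illustration, not real institutional data.
HISTORY = [
    ("in_state_visited", True),
    ("in_state_visited", True),
    ("in_state_visited", False),
    ("out_of_state_no_visit", False),
    ("out_of_state_no_visit", False),
    ("out_of_state_no_visit", True),
    ("out_of_state_no_visit", False),
]


def fit_yield_rates(history, prior_rate=0.5, prior_weight=2.0):
    """Estimate enrollment likelihood per segment from past outcomes.

    Each segment's rate is smoothed toward prior_rate so that segments
    with very few records do not produce extreme scores.
    """
    enrolled = defaultdict(int)
    total = defaultdict(int)
    for segment, did_enroll in history:
        total[segment] += 1
        enrolled[segment] += int(did_enroll)
    return {
        seg: (enrolled[seg] + prior_rate * prior_weight) / (total[seg] + prior_weight)
        for seg in total
    }


def score(rates, segment, fallback=0.5):
    """Return the learned rate for a segment, or the fallback if unseen."""
    return rates.get(segment, fallback)


rates = fit_yield_rates(HISTORY)
```

The point of the sketch is the data source: the rates come entirely from the institution's own past outcomes, and the `fallback` value is the only place a generic average would enter.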

Finding the Right Fit for Every Campus

Advanced capabilities aren’t just for large universities. In fact, some of the best AI-powered enrollment systems for small colleges provide immense value by acting as a force multiplier for leaner teams, allowing them to provide a highly personalized touch to their applicant pool without needing to double their headcount.

Scale and Strategy: Small Colleges vs. Large Universities

While the underlying technology is the same, how it’s deployed depends on the size of your campus. Both require AI to stay competitive, but for different strategic reasons:

- Small Colleges (The Force Multiplier): With leaner teams, small schools use AI to ensure no inquiry is missed. It automates administrative tasks so staff can focus on their greatest competitive advantage: deep, personal relationships with every applicant.

- Large Universities (The Data Navigator): For institutions managing massive applicant pools, AI provides essential triage. It scores thousands of files instantly and routes data across complex departments, ensuring high-yield prospects don’t get lost in the digital noise.
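The triage described for large universities can be sketched in a few lines. This is a simplified illustration, assuming each applicant already carries a predicted enrollment score; the `Applicant` fields, the `triage` function, and the 0.7 threshold are hypothetical choices, not a vendor's actual routing logic.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    applicant_id: str
    department: str
    score: float  # predicted enrollment likelihood, 0.0 to 1.0


def triage(applicants, high_threshold=0.7):
    """Group applicants by department, highest score first.

    Returns {department: {"priority": [...], "standard": [...]}} where
    "priority" holds applicants at or above high_threshold.
    """
    queues = {}
    for a in sorted(applicants, key=lambda a: a.score, reverse=True):
        dept = queues.setdefault(a.department, {"priority": [], "standard": []})
        bucket = "priority" if a.score >= high_threshold else "standard"
        dept[bucket].append(a.applicant_id)
    return queues


# Invented sample data for illustration only.
applicants = [
    Applicant("A1", "nursing", 0.82),
    Applicant("A2", "nursing", 0.41),
    Applicant("A3", "engineering", 0.91),
    Applicant("A4", "engineering", 0.75),
]
queues = triage(applicants)
```

Sorting before bucketing means each department's counselors see their strongest prospects at the top of the queue, which is the "don't get lost in the noise" goal in miniature.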

Whether you are scaling a personal touch or managing a data deluge, customizing your AI enrollment workflows ensures the platform serves your specific mission.

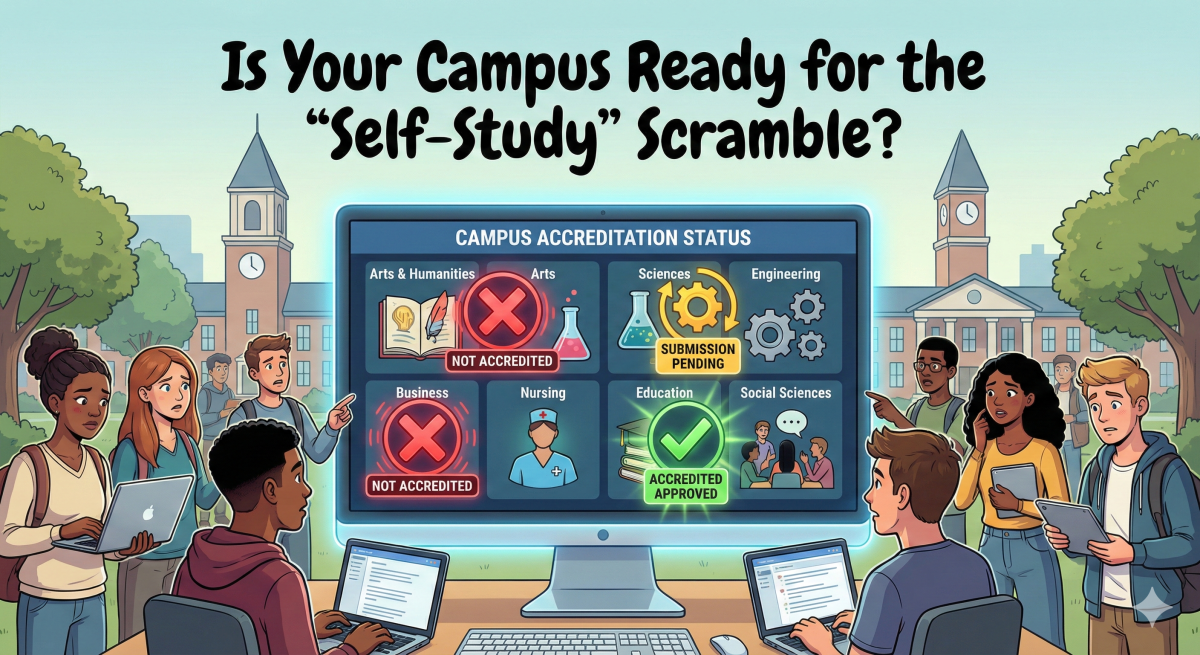

The Key to Success: Eliminating Data Silos

The greatest AI tool in the world is useless if it cannot communicate with the rest of your campus technology. Seamless integration between AI-powered enrollment platforms and student information systems (SIS) is the critical bridge that makes these tools work.

When your enrollment AI and your SIS speak the same language, admissions teams can work from a single, accurate source of truth. As soon as an applicant submits an application, that information automatically updates in the SIS, where it can trigger financial aid packaging, housing assignments, and course registration workflows without manual data entry.
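As a rough sketch of that handoff, the example below models the SIS as an in-memory dictionary and queues downstream workflows when an application arrives. Everything here is an assumption made for illustration: `sis_records`, `workflow_queue`, `on_application_submitted`, and the workflow names are hypothetical, and a real integration would go through the SIS vendor's own interface.

```python
# In-memory stand-ins for the SIS and its downstream workflow queue.
# A real deployment would call the SIS vendor's API instead; the names
# below are hypothetical.
sis_records = {}
workflow_queue = []

DOWNSTREAM_WORKFLOWS = (
    "financial_aid_packaging",
    "housing_assignment",
    "course_registration",
)


def on_application_submitted(application):
    """Upsert the applicant into the SIS and queue downstream workflows.

    Workflows are queued only the first time an applicant is seen, so a
    resubmitted application updates the record without duplicating work.
    """
    applicant_id = application["applicant_id"]
    is_new = applicant_id not in sis_records
    # Merge new fields over any existing record for this applicant.
    sis_records[applicant_id] = {**sis_records.get(applicant_id, {}), **application}
    if is_new:
        for name in DOWNSTREAM_WORKFLOWS:
            workflow_queue.append((applicant_id, name))
    return is_new


# Invented sample submissions: an initial application, then an update.
first = on_application_submitted(
    {"applicant_id": "A1", "name": "Sample Applicant", "program": "nursing"}
)
second = on_application_submitted({"applicant_id": "A1", "program": "biology"})
```

Keying every update on a single applicant ID is what keeps the record a single source of truth: the second submission changes the program on the existing record instead of creating a duplicate.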

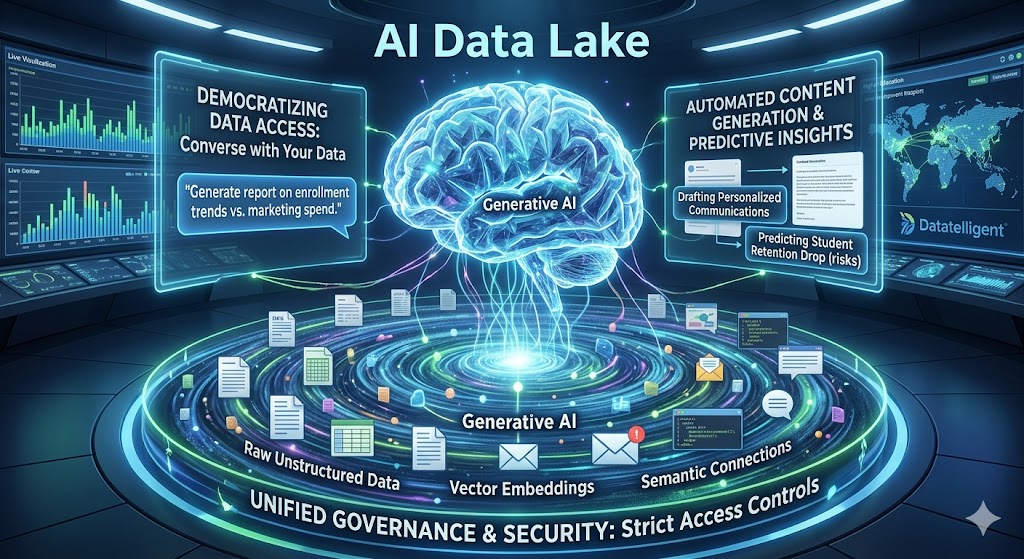

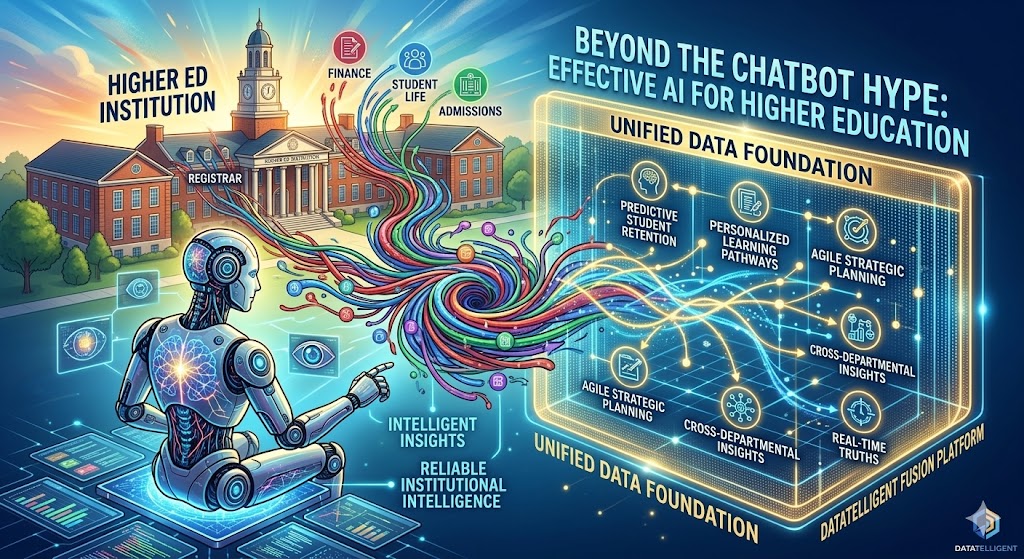

Giving Data a Voice with Datatelligent’s Fusion Platform

For institutions looking to adopt a true higher-education-first data strategy, untangling the complex web of integrations, dashboards, and automated data extraction can be daunting. That is where Datatelligent steps in.

Our Fusion Platform is purpose-built for higher education, designed to break down departmental silos and turn raw admissions data into actionable, predictive insights. Whether you need to connect a fragmented SIS, build intuitive enrollment dashboards for your leadership team, or deploy custom machine learning models to predict student melt, the Fusion Platform handles the heavy lifting.

We ensure that your enrollment workflows are highly customized and fully integrated, empowering your staff to focus on what they do best: building relationships with future students.

Ready to transform your admissions process? Learn more about how our AI models and solutions can integrate with your enrollment ecosystem and drive your institution’s growth.